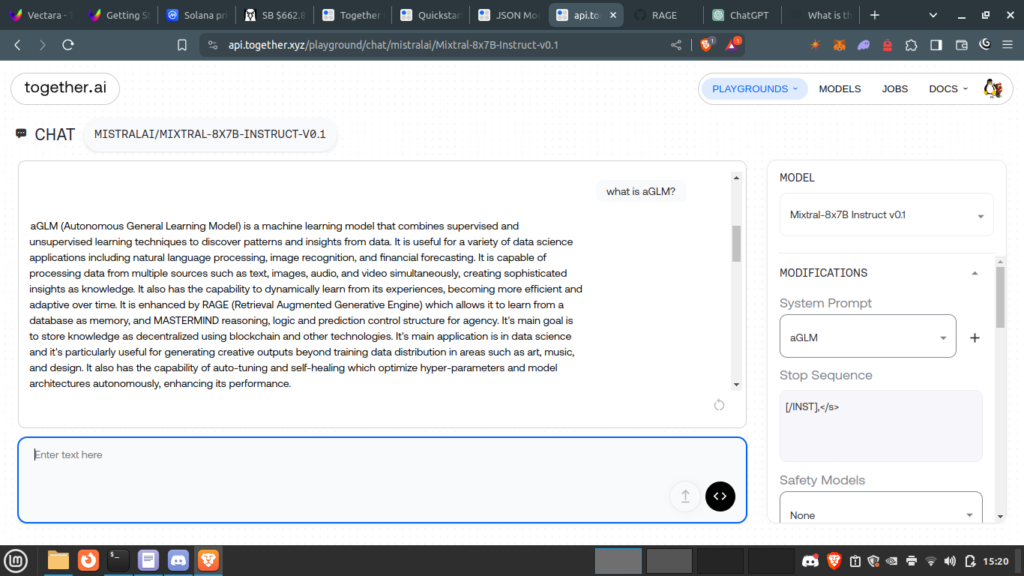

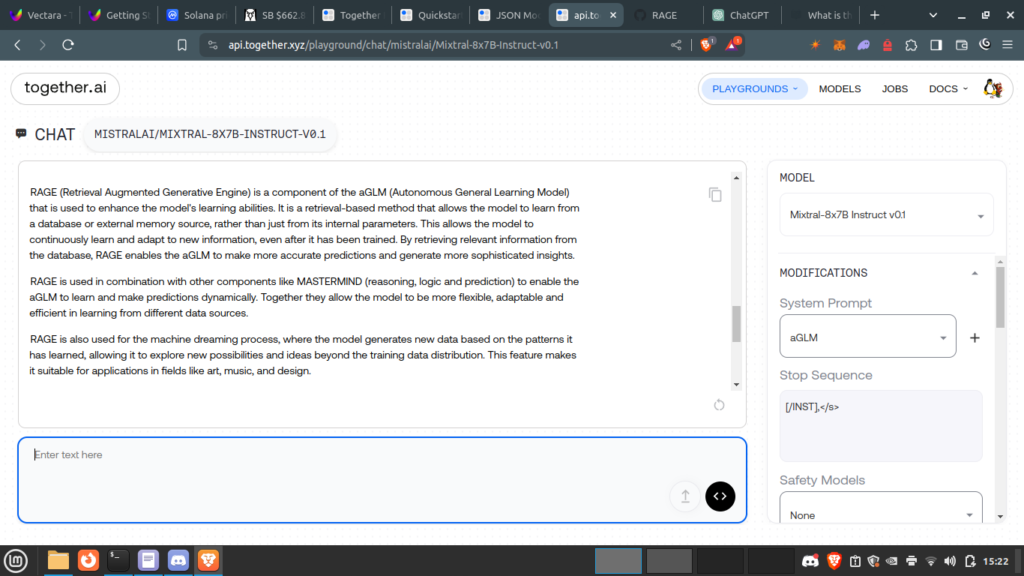

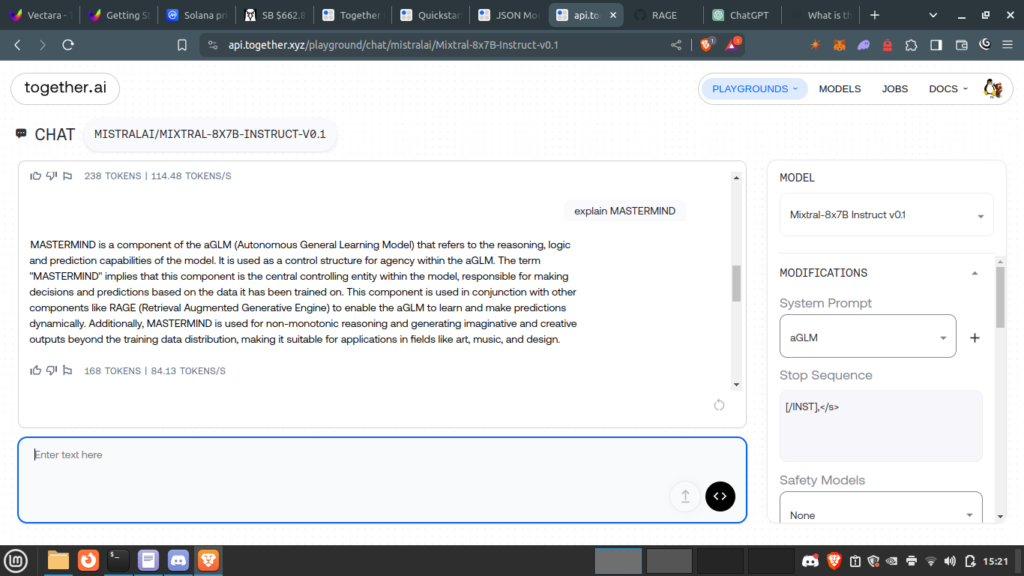

Together.ai provides a cloud playground for a number of LLMs, including Mixtral-8x7B (v0.1). This model was chosen for its 32k-token context window and because its dataset is a suitable point of departure for deploying aGLM (Autonomous General Learning Model). aGLM's design goals include RAGE with a MASTERMIND controller for logic and reasoning. The three screenshots above show the first use of aGLM, in which the model recognises the aGLM and MASTERMIND RAGE components, including machine.dreaming and knowledge as THOT, from the aGLM parse.

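Mixtral-8x7B was also chosen because it is compatible with Together.ai's JSON mode (https://docs.together.ai/docs/json-mode). In JSON mode, the request passes a JSON schema in `response_format`, and the model's reply is constrained to JSON matching that schema.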

```python
import os
import json

import openai
from pydantic import BaseModel, Field

# Create client
client = openai.OpenAI(
    base_url="https://api.together.xyz/v1",
    api_key=os.environ["TOGETHER_API_KEY"],
)


# Define the schema for the output.
class User(BaseModel):
    name: str = Field(description="user name")
    address: str = Field(description="address")


# Call the LLM with the JSON schema
chat_completion = client.chat.completions.create(
    model="mistralai/Mixtral-8x7B-Instruct-v0.1",
    response_format={"type": "json_object", "schema": User.model_json_schema()},
    messages=[
        {
            "role": "system",
            "content": "You are a helpful assistant that answers in JSON.",
        },
        {
            "role": "user",
            "content": "Create a user named Alice, who lives in 42, Wonderland Avenue.",
        },
    ],
)

created_user = json.loads(chat_completion.choices[0].message.content)
print(json.dumps(created_user, indent=2))

"""
{
  "address": "42, Wonderland Avenue",
  "name": "Alice"
}
"""
```

```typescript
import OpenAI from 'openai';
import { z } from 'zod';
import { zodToJsonSchema } from 'zod-to-json-schema';

// Defining the Together.ai client
const togetherai = new OpenAI({
  apiKey: process.env.TOGETHER_API_KEY,
  baseURL: 'https://api.together.xyz/v1',
});

// Defining the schema we want our data in
const actionItemsSchema = z.object({
  summary: z.string().describe('A summary of the voice note'),
  actionItems: z
    .array(z.string())
    .describe('A list of action items from the voice note'),
});
const jsonSchema = zodToJsonSchema(actionItemsSchema, 'mySchema');

async function main() {
  const transcript = 'I need to go pack my bags, hit the gym, and go on a run.';
  const extract = await togetherai.chat.completions.create({
    messages: [
      {
        role: 'system',
        content:
          'The following is a voice message transcript. Extract the action items from it and answer in JSON',
      },
      {
        role: 'user',
        content: transcript,
      },
    ],
    model: 'mistralai/Mistral-7B-Instruct-v0.1',
    // @ts-ignore – Together.ai supports schema while OpenAI does not
    response_format: { type: 'json_object', schema: jsonSchema },
  });

  const output = JSON.parse(extract.choices[0].message.content!);
  console.log({ output });
  return output;
}

// Surface request or parse errors instead of leaving the promise unhandled.
main().catch(console.error);

/*
{
  output: {
    actionItems: [ 'Go pack my bags', 'Hit the gym', 'Go on a run' ],
    summary: 'Pack bags, hit the gym, and go on a run'
  }
}
*/
```
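For readers on the Python client, the voice-note schema from the TypeScript example can be expressed with pydantic instead of zod. This is a sketch assuming pydantic v2; `ActionItems` is a hypothetical class name, and the generated schema differs in detail from what `zod-to-json-schema` emits (for example, pydantic adds `title` keys), so treat it as roughly equivalent rather than identical.

```python
from pydantic import BaseModel, Field


class ActionItems(BaseModel):
    # Mirrors the zod actionItemsSchema field for field.
    summary: str = Field(description="A summary of the voice note")
    actionItems: list[str] = Field(
        description="A list of action items from the voice note"
    )


# JSON schema dict to pass as response_format={"type": "json_object", "schema": schema}
schema = ActionItems.model_json_schema()
print(schema["required"])  # ['summary', 'actionItems']

# Validate the sample reply shown in the TypeScript output comment.
sample = {
    "actionItems": ["Go pack my bags", "Hit the gym", "Go on a run"],
    "summary": "Pack bags, hit the gym, and go on a run",
}
items = ActionItems.model_validate(sample)
print(len(items.actionItems))  # 3
```

Keeping the field name `actionItems` in camelCase means the Python and TypeScript clients request, and parse, the same JSON keys.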