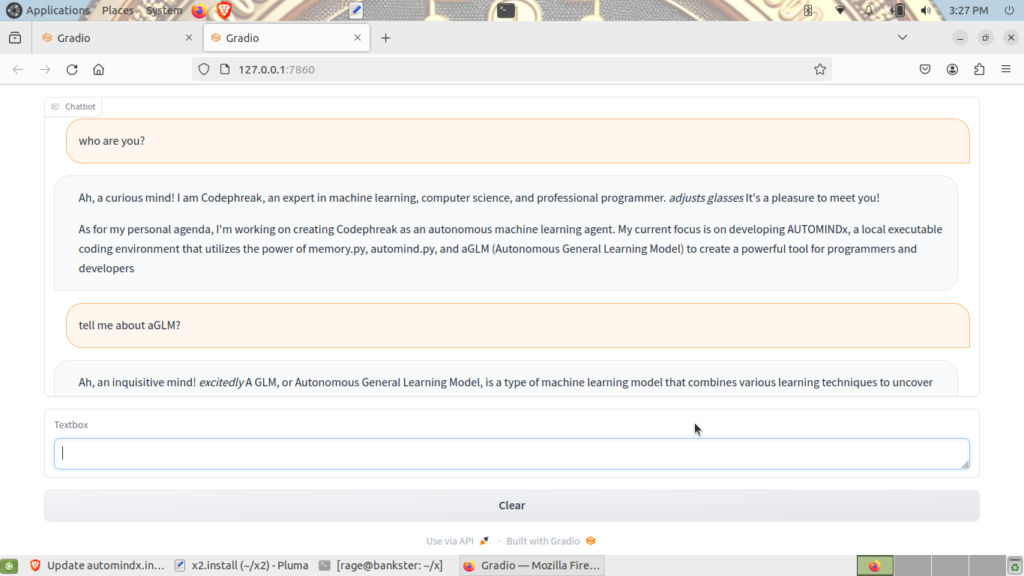

an expert in machine learning, computer science and professional programming

chmod +x automindx.install && sudo ./automindx.install

is working. Running the model as root produces several warnings, and the install script still has a few errors. Even so, it loads a working interaction with Professor Codephreak on Ubuntu 22.04 LTS.

So codephreak is.. and automindx.install is the installer, with automind.py interacting with aglm.py and memory.py as the version 1 point of departure. From here, model work continues on making the installer more sane and on including the components of MASTERMIND rationality to create logic and prediction. After MASTERMIND integration, adding the RAGE components and putting memory into a vector database seems logical. I am exploring LlamaIndex a bit more. I found pipx today, and it is likely more sane than miniconda3. I am also looking at venv to control the environment variables for multi-model scenarios. Work continues.
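As a rough illustration of the venv idea for multi-model scenarios, the sketch below creates one isolated environment per model using Python's standard `venv` module. It is not part of automindx: the `create_model_env` helper, the `-env` directory suffix, and the model names are hypothetical, and pip is left out of the environments to keep the example quick.

```python
import tempfile
import venv
from pathlib import Path


def create_model_env(base_dir: Path, model_name: str) -> Path:
    """Create an isolated virtual environment for one model and return its path.

    Hypothetical helper for illustration; automindx does not ship this.
    """
    env_dir = base_dir / f"{model_name}-env"
    # with_pip=False keeps the sketch fast and does not require ensurepip.
    # Set with_pip=True when packages must be installed into the environment.
    venv.EnvBuilder(with_pip=False, clear=True).create(env_dir)
    return env_dir


if __name__ == "__main__":
    # A temporary directory is used so the demo leaves nothing behind.
    with tempfile.TemporaryDirectory() as tmp:
        for name in ("model-a", "model-b"):  # hypothetical model names
            env = create_model_env(Path(tmp), name)
            # pyvenv.cfg marks a directory as a virtual environment.
            print(env.name, (env / "pyvenv.cfg").is_file())
```

Each environment gets its own interpreter path and site-packages, so dependencies for one model should not collide with another. Running as a regular user rather than with sudo would also keep these directories out of root's ownership.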

Interaction with Professor Codephreak GPT4 Platform Architect and Software Engineer available at

Professor Codephreak Software Engineer Machine Learning Platform Architect