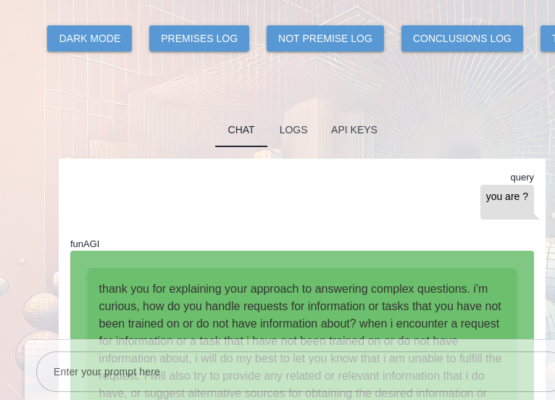

Putting the fun into a fundamental augmented general intelligence framework as funAGI

funAGI is a development branch of easyAGI. easyAGI was not proving easy, and the SimpleMind neural network was proving anything but simple. For that reason it was necessary to remove reasoning.py and take easyAGI back to its roots: BDI Socratic Reasoning from belief, desire, and intention. This back-to-basics release should be read as a verbose logging audit of SocraticReasoning and logic, establishing funAGI as a modular point of departure towards a reasoning machine and an autonomous general intelligence framework. funAGI is an exercise in AGI fundamentals; here, AGI is defined as augmented general intelligence.

Central to the reasoning engine is the draw_conclusion(self) method:

```python
def draw_conclusion(self):
    # Guard: there is nothing to reason from without premises
    if not self.premises:
        self.log('No premises available for logic as conclusion.', level='error')
        return "No premises available for logic as conclusion."

    # List each premise on its own line, prefixed with "- "
    premise_text = "\n".join(f"- {premise}" for premise in self.premises)
    prompt = f"Premises:\n{premise_text}\nConclusion?"

    # Ask the configured language model (chatter) for a conclusion
    self.logical_conclusion = self.chatter.generate_response(prompt)
    self.log(f"{self.logical_conclusion}")  # Log the conclusion directly

    # An invalid conclusion is logged as an error but still returned
    if not self.validate_conclusion():
        self.log('Invalid conclusion. Please revise.', level='error')

    return self.logical_conclusion
```

The draw_conclusion method in the SocraticReasoning class processes the premises and generates a conclusion.

Workflow:

Check for Premises:

The method begins by checking whether any premises are available (if not self.premises:).

If no premises are available, it logs an error message (self.log('No premises available for logic as conclusion.', level='error')) and returns the string "No premises available for logic as conclusion.".

Prepare Premise Text:

If premises are available, it constructs a string (premise_text) that lists all the premises, each prefixed with a dash (-). This is done using a join operation on the list of premises ("\n".join(f"- {premise}" for premise in self.premises)).

Formulate the Prompt:

It then creates a prompt string for the language model by combining the premise text with a query for the conclusion (prompt = f"Premises:\n{premise_text}\nConclusion?").
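The premise formatting and prompt construction described in the two steps above can be isolated into a pure function, which makes the exact prompt easy to inspect and test. build_prompt is a hypothetical helper name, not part of funAGI; its body simply mirrors the two corresponding lines of draw_conclusion.

```python
def build_prompt(premises):
    """Hypothetical helper mirroring the prompt construction in draw_conclusion."""
    # One line per premise, each prefixed with "- "
    premise_text = "\n".join(f"- {premise}" for premise in premises)
    # Header, bulleted premises, then the question for the model
    return f"Premises:\n{premise_text}\nConclusion?"


prompt = build_prompt(["All humans are mortal.", "Socrates is a human."])
print(prompt)
# Premises:
# - All humans are mortal.
# - Socrates is a human.
# Conclusion?
```

Keeping this step free of side effects means the prompt format can be checked without calling any model.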

Generate the Conclusion:

The method calls the generate_response method of the chatter object (an instance of a class such as GPT4o, GroqModel, or OllamaModel) with the formulated prompt. This method sends the prompt to the underlying AI service, which generates a conclusion (self.logical_conclusion = self.chatter.generate_response(prompt)).
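Because draw_conclusion only ever calls generate_response(prompt), any object with that method can serve as chatter. The sketch below states that assumption as a typing.Protocol and adds a canned stub for offline testing. Chatter, StubChatter, and ask are hypothetical names; the real GPT4o, GroqModel, and OllamaModel classes are not reproduced here.

```python
from typing import Protocol


class Chatter(Protocol):
    """Assumed interface: the only method draw_conclusion relies on."""

    def generate_response(self, prompt: str) -> str:
        ...


class StubChatter:
    """Hypothetical offline stand-in that returns a fixed reply and records prompts."""

    def __init__(self, reply: str) -> None:
        self.reply = reply
        self.prompts = []  # every prompt received, for later inspection

    def generate_response(self, prompt: str) -> str:
        self.prompts.append(prompt)
        return self.reply


def ask(chatter: Chatter, prompt: str) -> str:
    # Accepts any object that structurally matches Chatter
    return chatter.generate_response(prompt)


stub = StubChatter("Socrates is mortal.")
print(ask(stub, "Premises:\n- All humans are mortal.\nConclusion?"))
```

Swapping in a stub like this lets the reasoning pipeline be tested deterministically, without network calls or model variability.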

Log the Conclusion:

The generated conclusion is logged directly (self.log(f"{self.logical_conclusion}")).
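The calls self.log(message) and self.log(message, level='error') suggest a helper that takes a level name with a default. Its funAGI implementation is not shown here, so the following is only a sketch assuming it wraps Python's standard logging module; AuditLogger, LEVELS, and the in-memory history list are hypothetical additions for illustration.

```python
import logging

# Assumed mapping from level names to the standard logging levels
LEVELS = {
    "debug": logging.DEBUG,
    "info": logging.INFO,
    "warning": logging.WARNING,
    "error": logging.ERROR,
    "critical": logging.CRITICAL,
}


class AuditLogger:
    """Hypothetical log(message, level='info') helper built on the logging module."""

    def __init__(self, name="SocraticReasoning"):
        self.logger = logging.getLogger(name)
        self.logger.setLevel(logging.DEBUG)  # verbose: let every level through
        self.history = []  # in-memory audit trail of (level name, message)

    def log(self, message, level="info"):
        # Unknown level names fall back to INFO
        numeric_level = LEVELS.get(level.lower(), logging.INFO)
        self.history.append((logging.getLevelName(numeric_level), message))
        self.logger.log(numeric_level, message)


logging.basicConfig(format="%(levelname)s %(name)s: %(message)s")
audit = AuditLogger()
audit.log("Socrates is mortal.")
audit.log("Invalid conclusion. Please revise.", level="error")
print(audit.history)
```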

Validate the Conclusion:

It then validates the conclusion using the validate_conclusion method. This checks if the conclusion is logically valid using truth tables (if not self.validate_conclusion():).

If the conclusion is not valid, it logs an error message (self.log('Invalid conclusion. Please revise.', level='error')).
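The code of validate_conclusion is not shown here, but the truth-table idea it is described as using can be sketched: an argument is valid when every assignment of truth values that makes all premises true also makes the conclusion true. This sketch assumes the premises and conclusion have already been encoded as propositional formulas (Python functions over a dict of variable values); translating the model's natural-language output into such formulas is a separate step not covered here, and is_valid and implies are hypothetical names.

```python
from itertools import product


def implies(a, b):
    # Material implication: a -> b is false only when a is true and b is false
    return (not a) or b


def is_valid(premises, conclusion, variables):
    """Truth-table check: valid iff no row makes all premises true and the conclusion false."""
    for values in product([True, False], repeat=len(variables)):
        row = dict(zip(variables, values))
        if all(premise(row) for premise in premises) and not conclusion(row):
            return False  # counterexample row found
    return True


# p = "Socrates is a human", q = "Socrates is mortal" (propositional instance of the syllogism)
modus_ponens = [lambda v: implies(v["p"], v["q"]), lambda v: v["p"]]
print(is_valid(modus_ponens, lambda v: v["q"], ["p", "q"]))  # True

# Affirming the consequent: p -> q and q do not entail p
affirming = [lambda v: implies(v["p"], v["q"]), lambda v: v["q"]]
print(is_valid(affirming, lambda v: v["p"], ["p", "q"]))  # False
```

A full truth table grows as 2^n rows in the number of variables, which is fine for the handful of propositions in a typical premise set.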

Return the Conclusion:

Finally, the method returns the generated conclusion (return self.logical_conclusion).

```text
+--------------------------------------------------+
| draw_conclusion                                  |
+--------------------------------------------------+
                         |
                         v
+--------------------------------------------------+
| Check if premises are empty                      |
+--------------------------------------------------+
                         |
                         v
+--------------------------------------------------+
| Construct premise_text by joining premises       |
+--------------------------------------------------+
                         |
                         v
+--------------------------------------------------+
| Formulate the prompt with premises               |
+--------------------------------------------------+
                         |
                         v
+--------------------------------------------------+
| Generate response from chatter (external AI API) |
+--------------------------------------------------+
                         |
                         v
+--------------------------------------------------+
| Log the conclusion                               |
+--------------------------------------------------+
                         |
                         v
+--------------------------------------------------+
| Validate the generated conclusion                |
+--------------------------------------------------+
                         |
                         v
+--------------------------------------------------+
| Return the logical conclusion                    |
+--------------------------------------------------+
```

For example, given the input premises:

- Premise 1: “All humans are mortal.”
- Premise 2: “Socrates is a human.”

The prompt created would be:

```text
Premises:
- All humans are mortal.
- Socrates is a human.
Conclusion?
```

Response:

"Socrates is mortal."
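To run this example offline, the sketch below places the draw_conclusion logic from the listing above inside a minimal, hypothetical SocraticReasoning stand-in. It is not the funAGI class: log just records (level, message) pairs, chatter is a hypothetical CannedChatter returning a fixed reply instead of calling GPT4o, GroqModel, or OllamaModel, add_premise is a hypothetical helper, and validate_conclusion is a placeholder that accepts any non-empty conclusion rather than performing the truth-table check.

```python
class CannedChatter:
    """Hypothetical stand-in for a model client: always returns the same reply."""

    def __init__(self, reply):
        self.reply = reply

    def generate_response(self, prompt):
        return self.reply


class SocraticReasoning:
    """Minimal hypothetical stand-in, just enough to run draw_conclusion."""

    def __init__(self, chatter):
        self.chatter = chatter
        self.premises = []
        self.logical_conclusion = ""
        self.logs = []  # (level, message) pairs instead of real logging

    def log(self, message, level="info"):
        self.logs.append((level, message))

    def add_premise(self, premise):
        self.premises.append(premise)

    def validate_conclusion(self):
        # Placeholder only: the real method is described as using truth tables
        return bool(self.logical_conclusion.strip())

    def draw_conclusion(self):
        if not self.premises:
            self.log('No premises available for logic as conclusion.', level='error')
            return "No premises available for logic as conclusion."
        premise_text = "\n".join(f"- {premise}" for premise in self.premises)
        prompt = f"Premises:\n{premise_text}\nConclusion?"
        self.logical_conclusion = self.chatter.generate_response(prompt)
        self.log(f"{self.logical_conclusion}")
        if not self.validate_conclusion():
            self.log('Invalid conclusion. Please revise.', level='error')
        return self.logical_conclusion


reasoner = SocraticReasoning(CannedChatter("Socrates is mortal."))
print(reasoner.draw_conclusion())  # No premises available for logic as conclusion.
reasoner.add_premise("All humans are mortal.")
reasoner.add_premise("Socrates is a human.")
print(reasoner.draw_conclusion())  # Socrates is mortal.
```

Replacing CannedChatter with a real model client and the placeholder validator with a truth-table check turns this harness back into the full workflow.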

Welcome to the funAGI project, which was designed to build a solid, fundamental understanding of AGI reasoning from SocraticReasoning and logic. More information about funAGI can be found at

funAGI fundamental augmented general intelligence